NZ Media News

AI Leadership Security Under Scrutiny Following Attack on OpenAI CEO

An incident involving an alleged Molotov cocktail attack on OpenAI CEO Sam Altman's residence highlights growing tensions and potential security risks surrounding prominent figures in the AI industry. This event underscores the intense public sentiment and scrutiny directed at AI development, which could influence its future trajectory and adoption globally.

What Happened

- A 20-year-old man was arrested for allegedly throwing a Molotov cocktail at Sam Altman's San Francisco home on 10 April 2026.

- The incident occurred early Friday morning and was captured by surveillance cameras.

- Later that day, a man matching the suspect's description was reportedly seen making threats near OpenAI's offices.

- The San Francisco Standard reported on the arrest, citing San Francisco police.

Why It Matters for NZ Marketers

- Security concerns for key AI leaders could impact the stability and direction of global AI development, affecting NZ's access to cutting-edge tools.

- Heightened public scrutiny and potential backlash against AI could influence regulatory environments, including those being considered in New Zealand.

- The incident signals a growing emotional response to AI's societal impact, which NZ marketers must understand when communicating AI-powered solutions.

- Disruptions to major AI companies' leadership could delay or alter product roadmaps, impacting NZ businesses relying on these technologies.

Strategic Implications

- Marketers should prepare for potential shifts in public perception of AI, adapting messaging to address ethical concerns and security implications.

- Companies integrating AI must consider the broader societal context and potential for public discontent, ensuring transparent and responsible deployment.

- NZ businesses should diversify their AI partnerships where possible, mitigating risks associated with reliance on a single, potentially unstable provider.

- Brands leveraging AI should proactively communicate the benefits while also acknowledging and addressing potential risks, fostering trust with consumers.

Future Trend Signals

- Increased security measures and personal protection for high-profile AI developers and executives.

- Growing public activism, both positive and negative, surrounding the development and deployment of advanced AI.

- Potential for greater regulatory pressure on AI companies to address societal concerns and mitigate risks.

- A shift towards more decentralised or open-source AI development to reduce single points of failure or attack.

Editorial note: This analysis is original, AI-assisted editorial content. All source material is attributed with links. No full articles are reproduced. Short excerpts are used under fair dealing principles.

Related Analysis

More posts sharing similar topics

AI & CommercePolitics

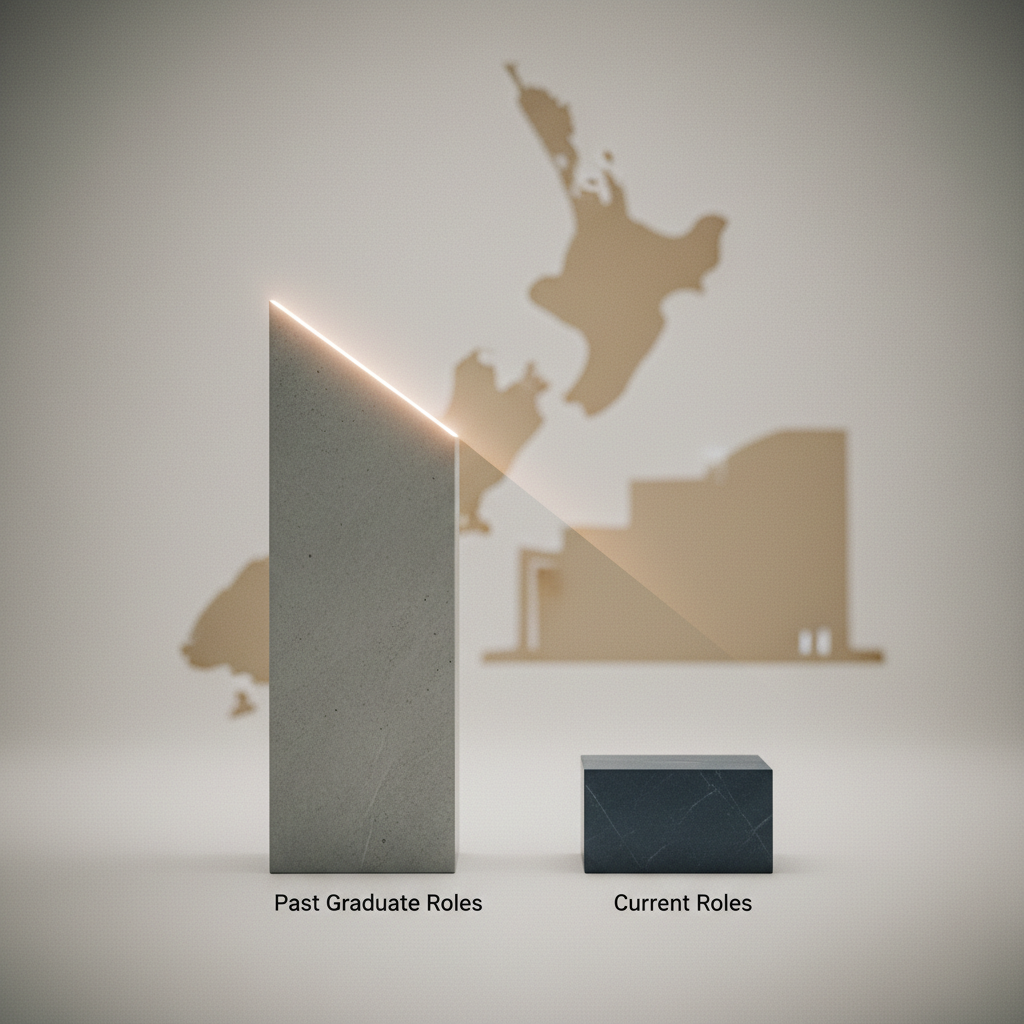

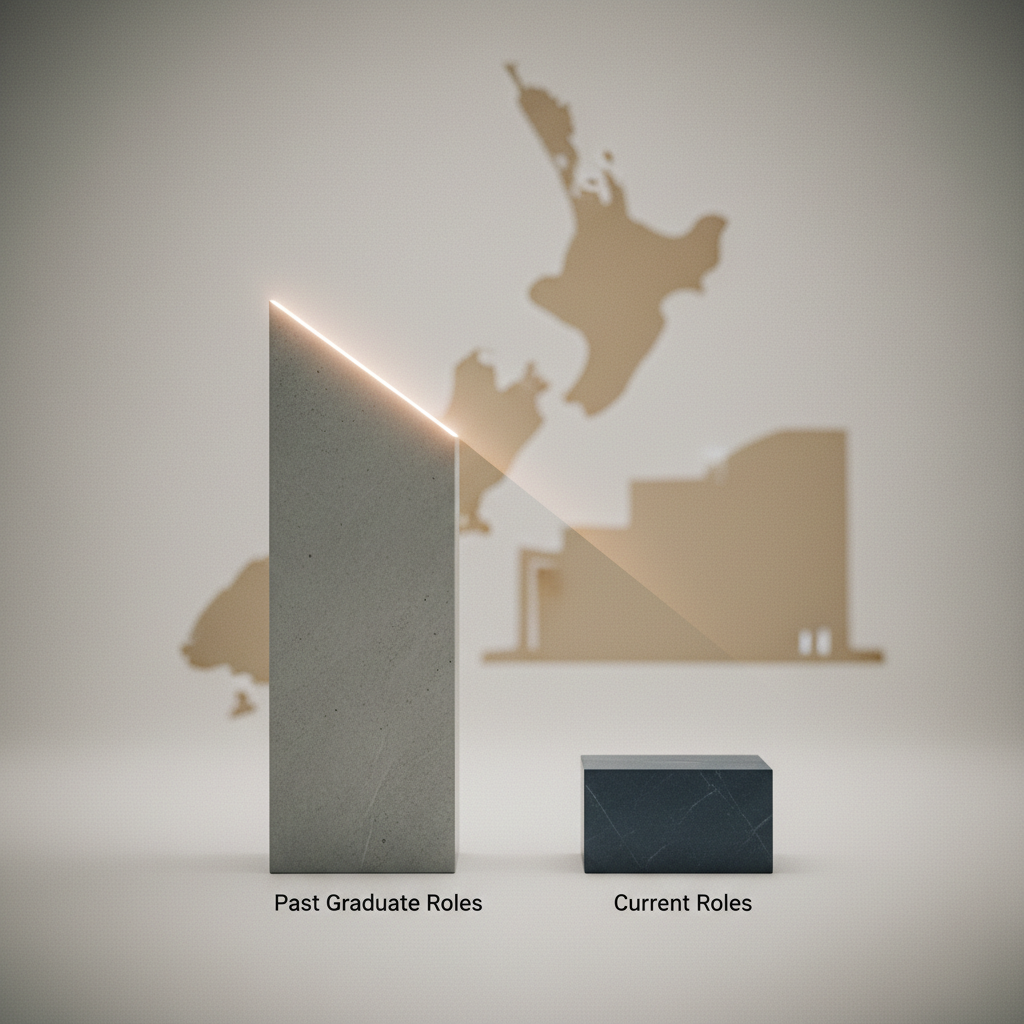

Public Sector Graduate Cuts Signal Wider Talent Market Shifts for NZ Marketers

AI & CommercePolitics

Social Media Addiction Verdicts Signal New Era of Platform Accountability

AI & CommercePolitics

Free Speech Union's Regulatory Scrutiny Signals Evolving Content Landscape for NZ Marketers

AI & CommercePolitics

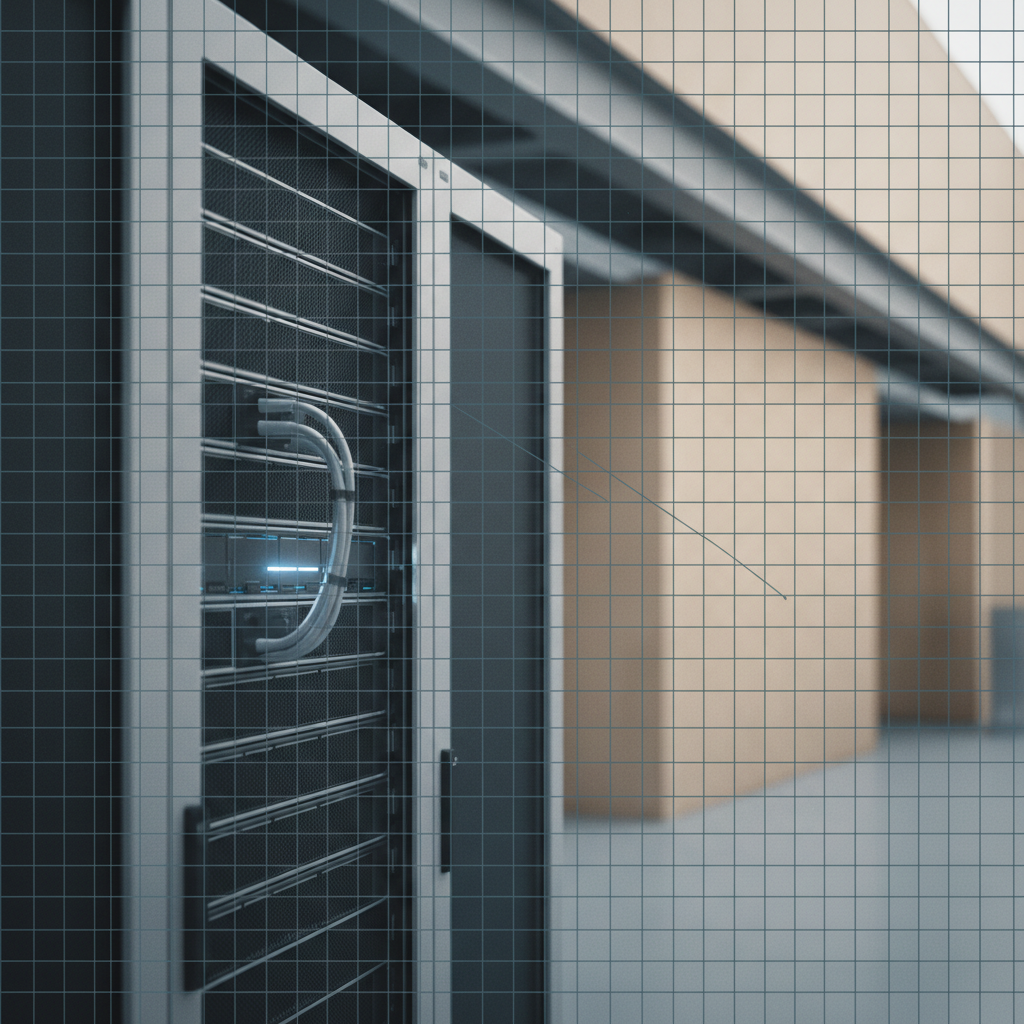

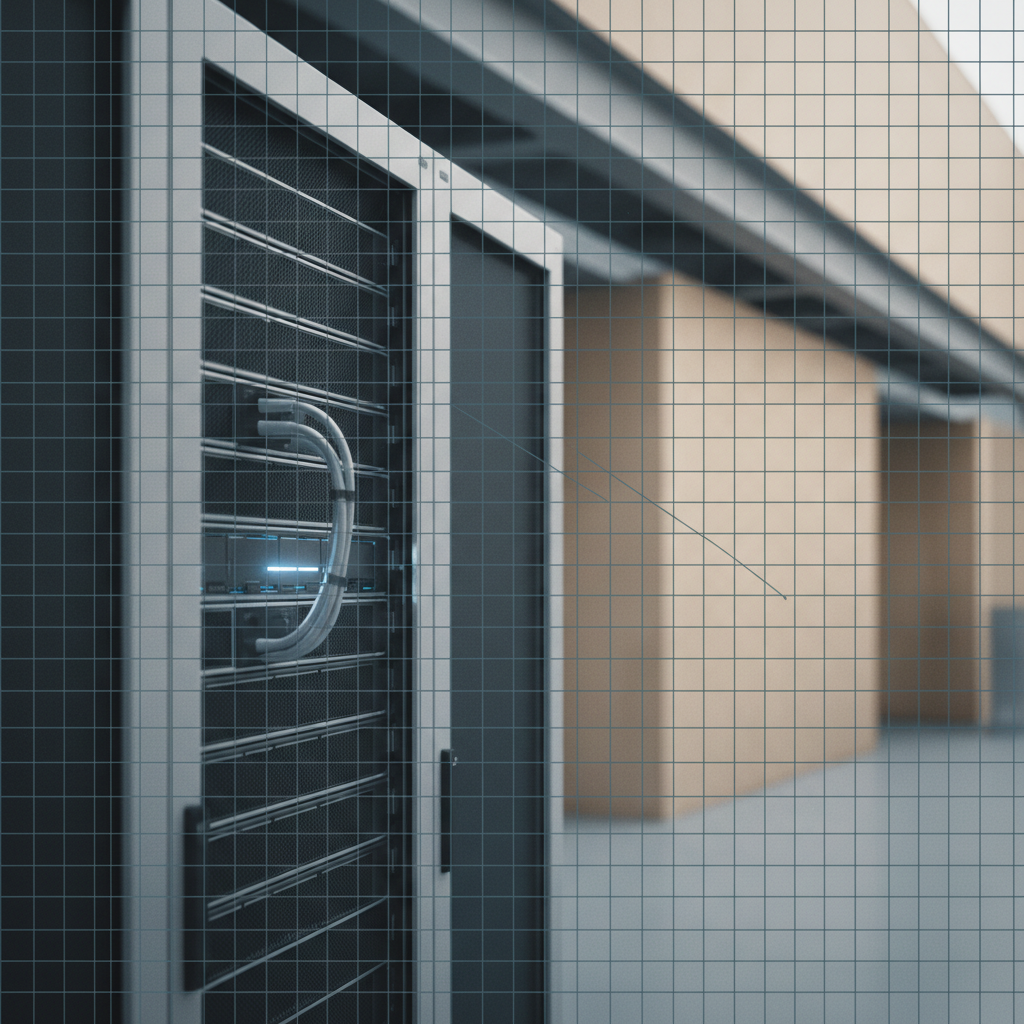

US Senators Demand Transparency on Data Centre Energy Consumption

AI & CommercePolitics